On "FiRe": Trality's New Financial Reinforcement Learning Repository

FEDERICO CORNALBA

29 March 2022 • 7 min read

In the previous episode…

In our initial piece on the subject, we spelled out the basics of the so-called Reinforcement Learning (RL) paradigm, and we hinted at the impact that embracing such a paradigm could have on our fintech company. A summary of the main points that we made—laid out as a mock interview—is as follows.

- What is RL? RL is a subset of Machine Learning, which is devoted to studying problems that are intrinsically framed in terms of dynamic, multi-step decision-making processes. The goal is for the machine to learn to take suitable actions based on the current environment state in order to maximize a cumulative gain (measured in terms of a pre-specified reward mechanism).

- Is RL just one thing? Not at all. Depending on the specific instance, one can pick the most appropriate RL variant (usually referred to as an RL agent) from a vast spectrum ranging from thorough environment exploration techniques (i.e., critic-based approaches) all the way to direct decision-making training schemes (i.e., actor-based approaches).

- What are the simplest applications of RL one can think of? Multi-step games, such as Atari 2600, are ideal candidates, as they fit the RL framework by design. But, crucially for us, financial applications are good candidates, too (see the next point).

- What’s up with Trality and RL? A trading bot, by definition, is an automated rule that considers the state of the financial market at a given time and takes actions (on behalf of an investor) in order to maximize a given reward mechanism. Consequently, conceptualizing a bot can definitely be done in the RL framework! As our core mission is to put our users in the best possible position to be able to create, deploy, and share successful trading bots, we believe that providing comprehensive RL tools would be advantageous to our users as it would—metaphorically speaking—add a few more useful tricks up their sleeves.

Introducing FiRe

In accordance with the vision summarized in the last point above, we have been developing our own open source repository, which collects existing Reinforcement Learning tools for stock and cryptocurrency trading and builds new ones. And ... here it is:

https://github.com/trality/fire

The core features to which we are committed are interpretability, extremely intuitive UI, modular code structure, and accessible reproducibility.

Currently, the tools available in our repository are associated with a critic-only Deep Q-Learning RL agent with Hindsight Experience Replay for both single- and multiple-reward learning with generalization (no need to grasp everything now; there's more on this below).

Our plan is for the FiRe repository to be constantly expanded (by Trality as well as by the broader community). In the long run, we hope that FiRe will become a solid and trusted reference, one that bot creators can use to build their bots comfortably and reliably.

What this piece is about

Well, pretty straightforward:

We provide the minimal necessary context which is required to navigate the structure of – and use the existing tools in – our FiRe repository.

A "crash-course" on the currently implemented RL agent

Our current RL agent is a critic-only Deep Q-Learning agent with Hindsight Experience Replay for both single- and multiple-reward learning with generalization. Even though the full agent description goes beyond the scope of this piece, we put together the essential components.

- What's the agent after? The agent seeks to maximize the cumulative reward that it can collect in its exploration of a given single-asset financial environment.

- Single- vs. Multi-Reward. The agent can be trained on one or more reward mechanisms. Generally speaking, the agent is fed a weight vector via which the individual rewards are accounted for.

- What's the agent made of? Among other things, our agent includes a Neural Network that maps the current state of the environment to all the "estimated" cumulative rewards that the algorithm could achieve by: i) taking any given, admissible action as next move, and ii) operating in the best possible way thereafter. Point i) is what the terminology "critic-only" refers to.

- How is the agent trained? The agent keeps storing "past events" in a so-called Hindsight Experience Replay. Whenever training occurs, the agent's Neural Network is updated via the Bellman equation, which in turn operates on a randomly sampled batch from the Hindsight Experience Replay. Specific details on the Bellman equation update are not given here, as this is not the main point of this discussion.

- How long does the agent's training last? The agent runs through the environment several times, or episodes (in a way that is not dissimilar to exhausting several rounds of a video game).

- What's the Hindsight Experience Replay specifically made of? The agent memorizes all past experiences as tuples of the form

    tuple = [
        starting environment state,
        action taken,
        next environment state visited,
        weights for the reward vector,
        scalar reward obtained by weighting the reward vector
    ]

Getting Started with FiRe
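One note before the setup steps: the replay tuple and the Bellman update described in the crash-course above can be made concrete with a small sketch. Everything here is an illustration under assumptions and not FiRe's actual code: the `Transition` field names, the `scalarize` and `bellman_targets` helpers, and the stand-in `toy_q` "network" are all hypothetical. The sketch assumes the scalar reward is a plain weighted sum of the reward vector, ignores terminal states, and does not attempt to reproduce the hindsight-specific logic.

```python
import random
from collections import deque, namedtuple

import numpy as np

# Field names mirror the tuple listed in the crash-course; the names are illustrative.
Transition = namedtuple(
    "Transition", ["state", "action", "next_state", "weights", "reward"]
)


def scalarize(reward_vector, weights):
    """Collapse a reward vector into one scalar via a weighted sum (assumed form)."""
    return float(np.dot(weights, reward_vector))


def bellman_targets(batch, q_values_fn, discount):
    """One-step Q-learning targets: r + discount * max_a Q(next_state, a)."""
    next_states = np.stack([t.next_state for t in batch])
    rewards = np.array([t.reward for t in batch])
    next_q = q_values_fn(next_states)  # shape: (batch_size, n_actions)
    return rewards + discount * next_q.max(axis=1)


def toy_q(states):
    """Stand-in for the agent's Neural Network: fixed Q-values for 3 actions."""
    return np.tile(np.array([0.0, 1.0, 2.0]), (len(states), 1))


# Fill a small replay memory with made-up transitions.
memory = deque(maxlen=10_000)
weights = np.array([0.5, 0.5])
for step in range(8):
    state = np.full(3, float(step))
    next_state = np.full(3, float(step + 1))
    reward_vector = np.array([1.0, 3.0])  # two made-up reward signals
    memory.append(
        Transition(state, 0, next_state, weights, scalarize(reward_vector, weights))
    )

# Training step: sample a random batch and build the regression targets.
batch = random.sample(list(memory), 4)
targets = bellman_targets(batch, toy_q, discount=0.9)
```

In a real training loop, the network's predictions for the stored (state, action) pairs would then be regressed towards `targets`.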

Cloning. The FiRe repository can be cloned from GitHub by running

    $ git clone https://github.com/trality/fire.git

Change to the FiRe directory. Simply run

    $ cd fire

Checking your Python version. You'll need Python 3.8.10. You can check your current Python version by typing

    $ python3 --version

Activating virtual environment. Once we have cloned FiRe, we recommend setting up a virtual environment. This can be done by running

    $ python3 -m venv .venv
    $ source .venv/bin/activate

in the repository's main folder.

Finishing the setup. You can install all relevant packages and download the exact same BTCUSD-hour dataset we have used for some of our simulations by running

    $ make

From Start to Finish: Walking You through an Experiment

Setting up an experiment. Now that we have the repository installed, and we know what our RL agent does in a nutshell, we can illustrate more specific aspects of the code by walking through a complete dummy experiment.

An experiment is created after specifying the chosen dataset, the agent Neural Network's hyper-parameters, the chosen reward(s), and all relevant Deep Q-Learning parameters. This information is specified in a .json file, which looks something like

    {
        "dataset": {
            "path": "datasets/crypto_datasets/btc_h.csv",
            # ...
            # additional fields
            # ...
        },
        "model": {
            "epochs_for_Q_Learning_fit": "auto",
            "batch_size_for_learning": 2048,
            # ...
            # additional fields
            # ...
        },
        "rewards": ["LR", "SR", "ALR", "POWC"],
        "window_size": 24,
        "frequency_q_learning": 1000,
        "Q_learning_iterations": 500,
        "discount_factor_Q_learning": 0.9,
        # ...
        # additional fields
        # ...
    }

All fields in the .json file are explained in detail in the FiRe repository. Just to mention a few: "path" indicates the chosen dataset; "Q_learning_iterations" is the number of episodes; and "rewards" contains all the considered rewards. The ones that we have used so far (all appearing above) are:

- "LR" (LastReturn): self-explanatory!

- "SR" (SharpeRatio): computed over a lookback of fixed length.

- "ALR" (AverageLastReturn): average of LR over the same lookback.

- "POWC" (ProfitOnlyWhenClosingPosition): feedback given only when Long/Short positions are closed.

Running the experiment. Once the example.json file is ready, simply run

$ python3 main.py example.json

to start the experiment.
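Before launching a long run, it can be worth sanity-checking the configuration file. The sketch below is not part of FiRe: `check_config` and its rules are hypothetical, and the configuration contains only the keys shown in the excerpt above (a real FiRe config needs the additional fields documented in the repository). Note also that the `# ...` placeholder lines in the excerpt are not valid JSON and are therefore omitted here.

```python
import json

# Only the keys shown in the article's excerpt; a real FiRe config needs more fields.
config_text = """
{
  "dataset": {"path": "datasets/crypto_datasets/btc_h.csv"},
  "model": {"epochs_for_Q_Learning_fit": "auto", "batch_size_for_learning": 2048},
  "rewards": ["LR", "SR", "ALR", "POWC"],
  "window_size": 24,
  "frequency_q_learning": 1000,
  "Q_learning_iterations": 500,
  "discount_factor_Q_learning": 0.9
}
"""

# Reward names mentioned in the article.
KNOWN_REWARDS = {"LR", "SR", "ALR", "POWC"}


def check_config(config):
    """Return a list of human-readable problems (hypothetical checks, not FiRe's)."""
    problems = []
    unknown = set(config.get("rewards", [])) - KNOWN_REWARDS
    if unknown:
        problems.append(f"unknown rewards: {sorted(unknown)}")
    if not 0.0 < config.get("discount_factor_Q_learning", 0.0) <= 1.0:
        problems.append("discount factor should lie in (0, 1]")
    if config.get("window_size", 0) <= 0:
        problems.append("window_size should be positive")
    return problems


config = json.loads(config_text)
problems = check_config(config)  # empty list for the excerpt's values

# A deliberately broken variant, to show what a failed check looks like.
bad = check_config({**config, "rewards": ["LR", "XYZ"]})
```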

Outputs of the experiment. In addition to saving all of the agent's features throughout the execution (in particular, the agent's Neural Network), the experiment produces plots summarizing the agent's performance.

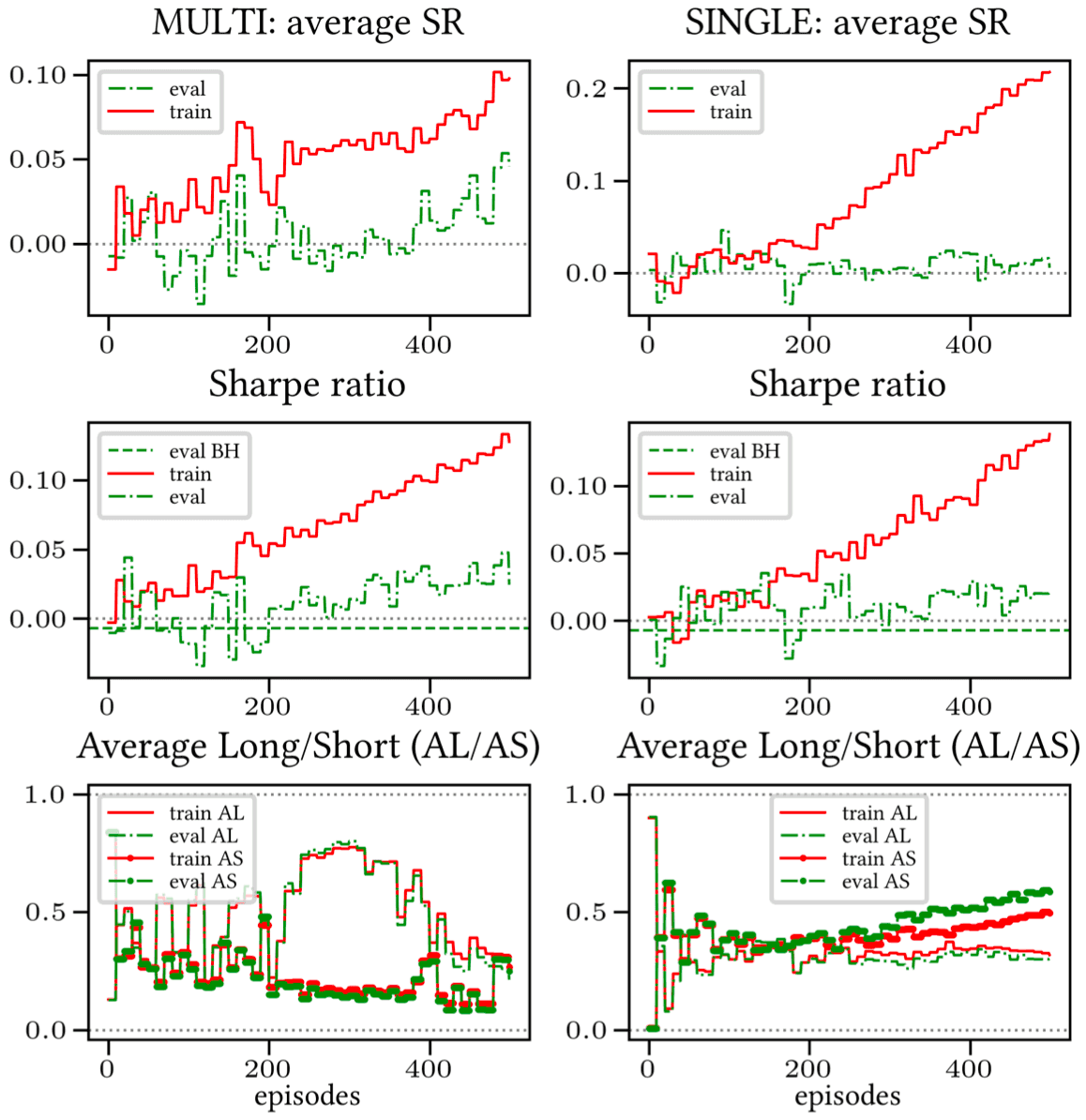

The first type of plot shows a given cumulative reward obtained by the agent on the train/evaluation sets as the episodes progress (see Figure 1).

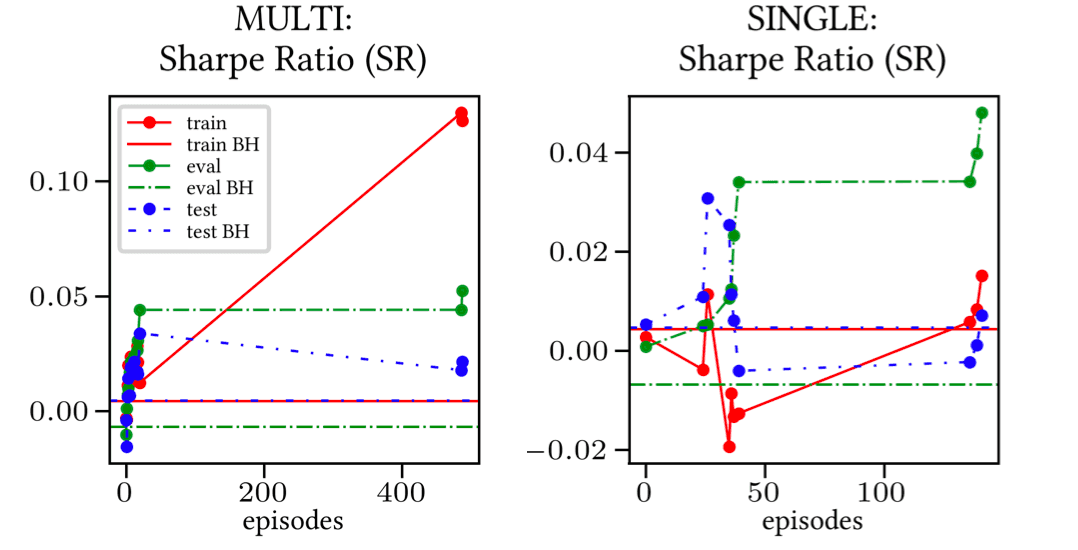

The second type of plot shows, for any given episode, the Sharpe ratio of the best-performing model on the evaluation set up to that very episode. These plots are used as statistically significant indicators in the comparison of multi- and single-reward simulations (see Figure 2).

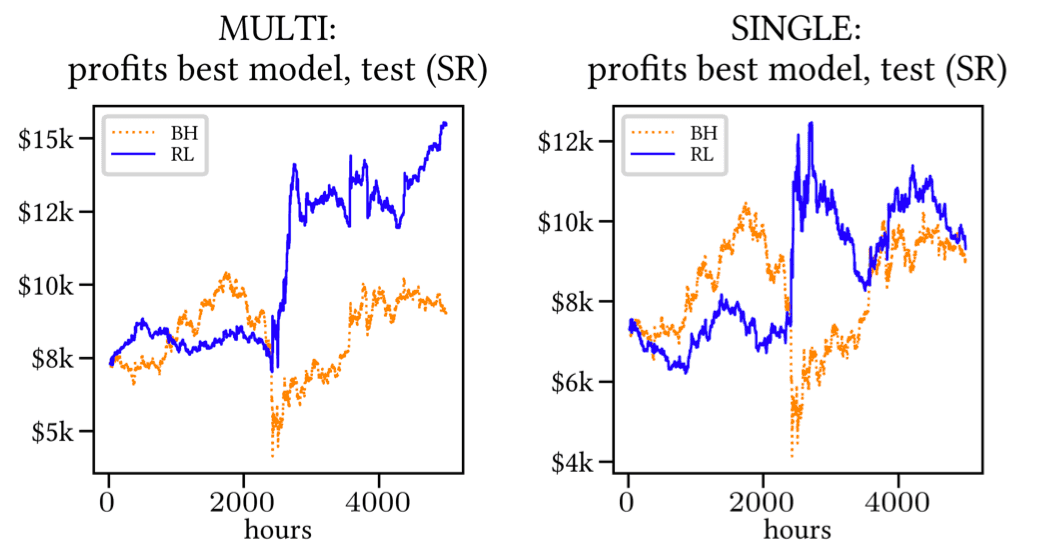

The third type of plot shows the overall profit (on the test set) associated with the best-performing model on the evaluation set mentioned above (see Figure 3).
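The "best-performing model on the evaluation set up to that very episode" logic behind the second and third plots can be sketched in a few lines. This is an illustration only: the helper name is hypothetical and the numbers are made up, not results from FiRe.

```python
def best_so_far_indices(eval_scores):
    """For each episode, the index of the best evaluation score seen so far."""
    best_idx, out = 0, []
    for i, score in enumerate(eval_scores):
        if score > eval_scores[best_idx]:
            best_idx = i
        out.append(best_idx)
    return out


# Made-up numbers, one entry per episode.
eval_sharpe = [0.1, 0.4, 0.3, 0.7, 0.5]  # evaluation-set score of each episode's model
test_profit = [1.0, 2.5, 2.0, 4.0, 3.0]  # test-set profit of each episode's model

best_idx = best_so_far_indices(eval_sharpe)             # [0, 1, 1, 3, 3]
reported_profit = [test_profit[i] for i in best_idx]    # [1.0, 2.5, 2.5, 4.0, 4.0]
```

Selecting the model on the evaluation set and reporting its profit on the test set keeps the test data out of the model-selection step.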

Answering your questions

If you are new to our repository (or to RL in general), we appreciate that you might have a lot of questions! To make things as clear as possible, we've put ourselves in your shoes and come up with what are, in our opinion, the most important questions, which are listed below. Should some of your questions not be listed, feel free to get in touch with us on Discord.

- Will Multi-Asset RL trading be included in the future? Yes, definitely.

- Will Actor- and Actor/Critic-based agents be included in the future? Yes, definitely.

- Can I try out your RL code with my own Single-Asset dataset? Of course. You will need to load your dataset as a .csv file.

- Can I actively contribute to the repository? Yes! We look forward to your PRs!

- What measures did you take towards code efficiency? We relied on several measures, including: i) vectorized evaluation of all of the agent's possible actions in the model's neural network (this is possible as the state and action spaces are small) and ii) use of randomly sampled portions of the training set.
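The two efficiency measures in the last answer can be illustrated with NumPy. This is a sketch under assumptions, not FiRe's code: the single linear layer stands in for the real neural network, and the sizes (a 24-dimensional state, 3 actions, 10,000 samples) are chosen only for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes (assumptions, not FiRe's actual dimensions).
n_samples, state_dim, n_actions = 10_000, 24, 3
states = rng.normal(size=(n_samples, state_dim))

# Stand-in for a trained network: one linear layer with one output per action.
W = rng.normal(size=(state_dim, n_actions))
b = np.zeros(n_actions)


def q_values(batch):
    """Q-values of every action for every state, in a single matrix product."""
    return batch @ W + b


# ii) Work on a randomly sampled portion of the training set.
batch_idx = rng.choice(n_samples, size=2048, replace=False)
batch = states[batch_idx]

# i) Evaluate all actions for all sampled states at once.
q = q_values(batch)                # shape: (2048, n_actions)
greedy_actions = q.argmax(axis=1)  # best action per state

# Reference: the same greedy actions computed one state at a time (slower).
loop_actions = np.array([int(np.argmax(s @ W + b)) for s in batch[:50]])
```

Because the network returns one value per action, a single forward pass scores every action for every sampled state, so no Python loop over states or actions is needed.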

Milestones coming up next

All of us at Trality have worked towards setting up the foundations of a repository, one that we would like to see grow and eventually become a strong and consolidated RL library for the benefit of our bot creators.

At the same time, we also believe that allowing open access to this repository is crucial towards giving members of the financial and data science community the opportunity to work, improve, and enrich the RL tools therein. We cannot wait to see what the community has to contribute to this cause!

In this long-term effort, we believe that the closest upcoming milestones on our side (to be reasonably achieved in the next 6 months) will be the following:

- Incorporate RL tools for Multi-Asset trading;

- Include Actor- and hybrid Actor/Critic-based RL agents.

Acknowledging sources. A selected number of features in our code take inspiration from two open source repositories: the gym-anytrading stocks environment and a minimal Deep Q-Learning implementation.

We hope you enjoyed reading this piece, and we would love for you to be involved in open source contributions to the FiRe repo. We look forward to answering questions that you may have.

Stay tuned for our next RL blog piece!